By James Redden; Molly O'Donovan Dix

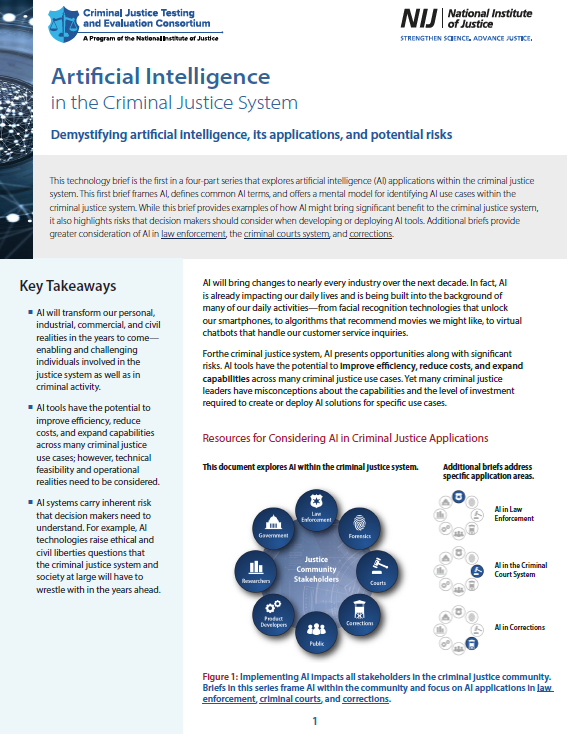

This technology brief is the first in a four-part series that explores artificial intelligence (AI) applications within the criminal justice system. This first brief frames AI, defines common AI terms, and offers a mental model for identifying AI use cases within the criminal justice system. While this brief provides examples of how AI might bring significant benefit to the criminal justice system, it also highlights risks that decision makers should consider when developing or deploying AI tools. Additional briefs provide greater consideration of AI in law enforcement, the criminal courts system, and corrections.

Key Takeaways

- AI will transform our personal, industrial, commercial, and civil realities in the years to come, enabling and challenging individuals involved in the justice system as well as in criminal activity.
- AI tools have the potential to improve efficiency, reduce costs, and expand capabilities across many criminal justice use cases; however, technical feasibility and operational realities need to be considered.
- AI systems carry inherent risk that decision makers need to understand. For example, AI technologies raise ethical and civil liberties questions that the criminal justice system and society at large will have to wrestle with in the years ahead.

AI will bring changes to nearly every industry over the next decade. In fact, AI is already affecting our daily lives and is being built into the background of many of our daily activities: facial recognition technologies that unlock our smartphones, algorithms that recommend movies we might like, and virtual chatbots that handle our customer service inquiries. For the criminal justice system, AI presents opportunities along with significant risks. AI tools have the potential to improve efficiency, reduce costs, and expand capabilities across many criminal justice use cases. Yet many criminal justice leaders have misconceptions about the capabilities of AI solutions and the level of investment required to create or deploy them for specific use cases.

Research Triangle Park, NC: RTI International.

2020. 10p.